I went to Maker Faire at the New York Hall of Science in Queens, NY on September 17th and 18th, and took plenty of photos. Like last year, the location was the site of the 1964 World's Fair. Even though I grew up pretty close to New York, I didn't get to see the World's Fair as a child, so I'm glad to have these opportunities to see what's left of it.

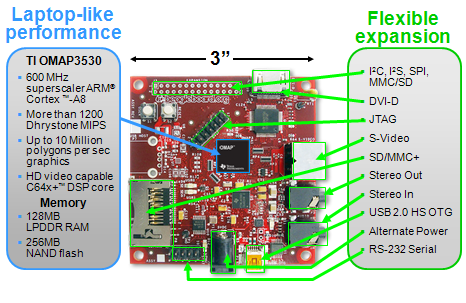

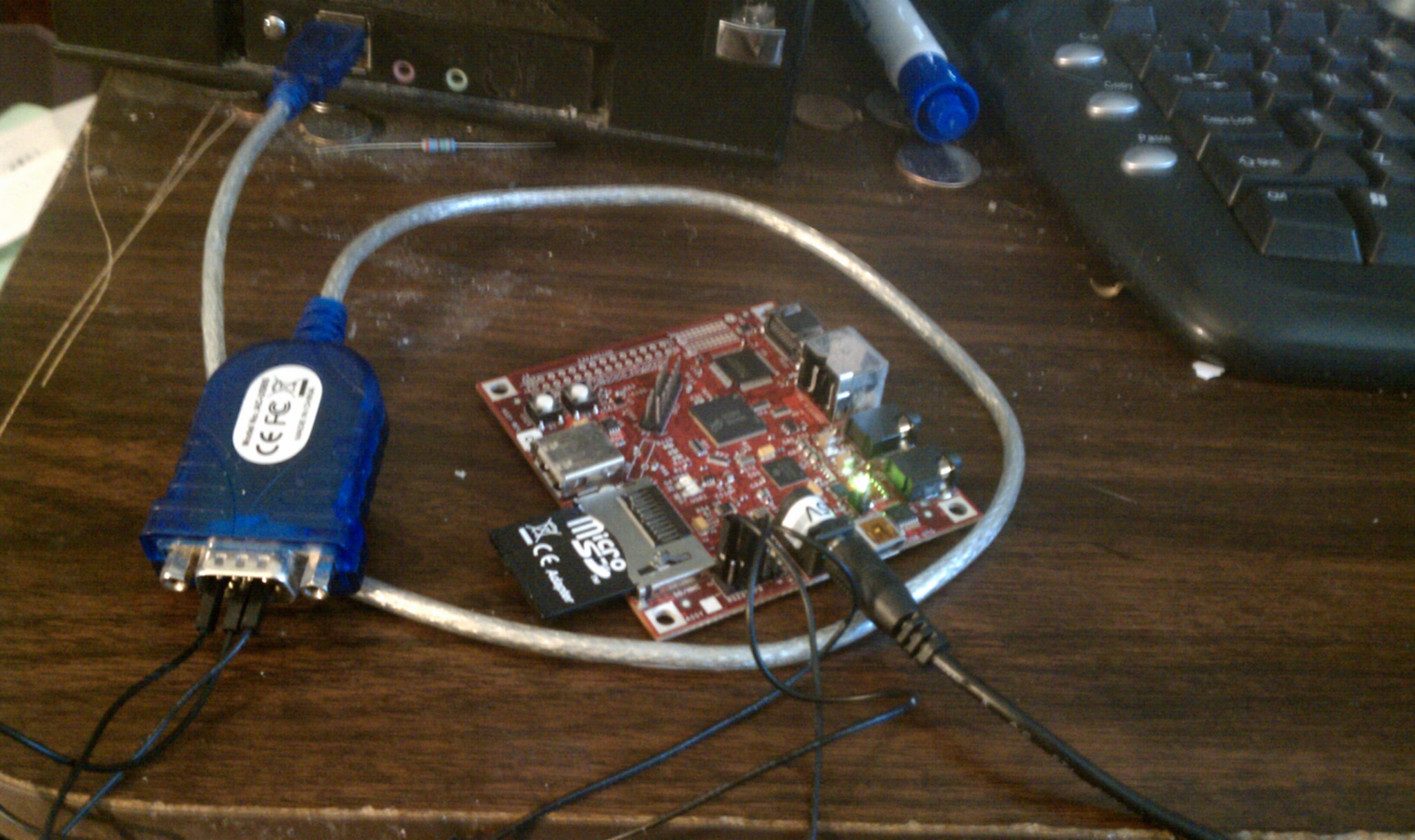

One thing I found interesting was that in addition to the expected Arduino stuff (which has the full weight of O'Reilly Media behind it), there were a good number of boards with more advanced microcontrollers, particularly ARM Cortex-M3 controllers. This interests me because with their larger address spaces and fuller feature sets, ARM processors can run Linux (on a Cortex-M3, which has no MMU, that means an MMU-less variant such as uClinux, and usually some external RAM), Python interpreters, or other big pieces of code beyond the itty-bitty programs that will fit on an Arduino. Teho Labs had a nice line of Cortex-M3 boards. They shared space with Dangerous Prototypes, who were showing off their Web Platform board.

As was true last year, there were lots of 3D printers and CNC milling machines. My impression this year was that a much larger percentage of them were hobbyist efforts rather than high-end commercial products. I think that's a good thing. The 3D printer hobbyist effort in general seems to me to be maturing, with the gradual emergence of more small businesses like Bre Pettis's MakerBot. The hard technical challenges (the big one being getting the extruder nozzle to work just right) have pretty much been identified now.

As I looked at some of the products, which have been improving in resolution, it occurred to me that an interesting approach would be, instead of going with increasingly fine nozzles, to use a coarse nozzle to place a slightly oversized drop of plastic, let that drop cool and harden, and then bring in a milling tool to shape it. This would mean moving back and forth frequently between the extruder nozzle and the milling tool, so it would need some tinkering and might not end up being an improvement.

There were lots of other tools, things on display, and cool stuff to see. I was really impressed with an elegant (if low-res) volumetric display called Lumarca. Essentially the guy uses a projector to project colors onto lengths of monofilament fishing line in the viewing volume, and by carefully controlling the projected image, he individually controls what colors appear along each length of monofilament.

There were a few interesting vehicles, like motorized skateboards and a Segway clone. Those were fun.

I did get pretty tired and sore walking around so much, and needed some Advil. But it was definitely worthwhile. Maybe I'll have some project next year so that I can have a booth of my own.

ARM Cortex-M3: http://www.arm.com/products/processors/cortex-m/cortex-m3.php